Assessment integrity you can defend — and actually use.

PlayAblo’s AI Proctoring is an assessment governance layer: identity validation, configurable risk logic, and evidence-backed supervisor review — designed for regulated certification programs and internal skill validations.

Outcomes leaders care about.

Built for both: certification-grade integrity and internal fairness at scale.

From “monitoring” to assessment governance.

The goal isn’t surveillance. It’s predictable policy enforcement with reviewable evidence — so your results remain credible and can be used safely in Skills Intelligence decisioning.

Traditional monitoring:

- Live invigilators or rigid monitoring rules

- Subjective decisions; limited proof trail

- Hard to scale cost-effectively

- Disputes are difficult to resolve

- Assessment data is too weak to support talent decisions

Assessment governance with PlayAblo:

- Assessment-level activation (policy-based)

- Identity validation before attempts

- Configurable Red/Amber/Green logic

- Evidence capture for supervisor review

- Trustworthy signals for Skills Intelligence

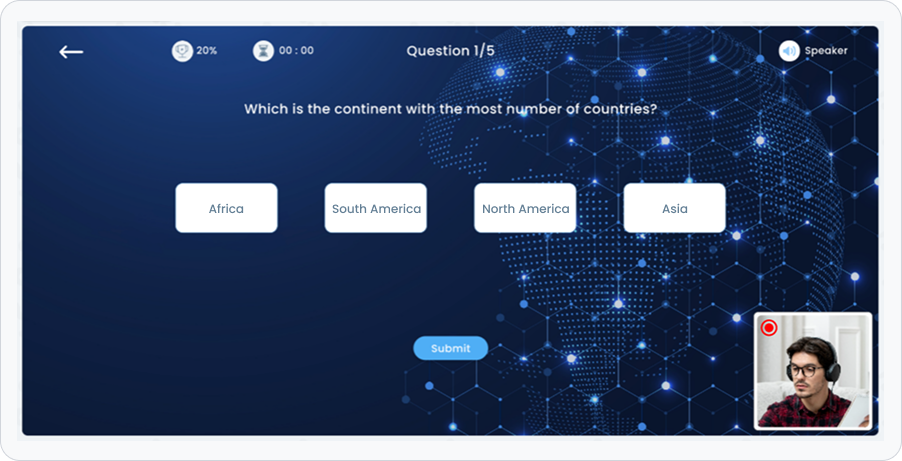

How it works — simplified.

A consistent workflow from activation to evidence-backed review.

Activation:

- Turn on “Requires AI Proctoring” at the assessment level

- Use selectively: high-stakes modules or certification gates

- Keep low-stakes quizzes friction-light

Pre-test validation:

- Camera and microphone checks, plus environment readiness

- Profile photo capture and face match before start

- On a mismatch: retry, or escalate for supervisor action as policy defines

In-attempt signals:

- Face presence, audio, tab switching, and copy/paste patterns

- Configurable thresholds for different risk levels

- Signals are logged, not “instantly punished” by default

Supervisor review:

- Dashboard filters by location, department, course, and severity

- Evidence for each incident: images, snapshots, and logs

- Actions are governed by span-of-control and policy

Selective activation: keep friction low where the stakes are low.

Apply proctoring only for high-stakes moments: certification checkpoints, role-readiness validations, or final assessments that feed Skills Intelligence dashboards.

Identity assurance before the assessment begins.

Prevent impersonation and reduce disputes with pre-test validation: device readiness, profile photo capture, and face match before a high-stakes attempt is allowed.
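The pre-test gate described above can be sketched as a small decision function. This is a minimal illustration, not PlayAblo's implementation: `IdentityPolicy`, `identity_gate`, the 0.80 similarity threshold, and the retry limit are all hypothetical, and the face-match score is assumed to come from some upstream matcher that returns a similarity between 0 and 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdentityPolicy:
    """Hypothetical identity-check settings (illustrative values only)."""
    match_threshold: float = 0.80  # minimum face-match similarity to pass
    max_retries: int = 2           # extra captures allowed after a failed match


def identity_gate(device_ready: bool, match_scores: list[float],
                  policy: IdentityPolicy = IdentityPolicy()) -> str:
    """Decide whether a high-stakes attempt may start.

    match_scores holds one similarity score (0.0 to 1.0) per profile-photo
    capture so far, in order. Returns one of:
      "blocked"  - device/environment readiness checks have not passed
      "allowed"  - a capture within the allowed tries matched the profile
      "capture"  - no match yet, but another capture is permitted
      "escalate" - all allowed tries failed; hand off to supervisor policy
    """
    if not device_ready:
        return "blocked"
    allowed_tries = 1 + policy.max_retries
    tries = match_scores[:allowed_tries]  # ignore anything past the limit
    if any(score >= policy.match_threshold for score in tries):
        return "allowed"
    if len(tries) < allowed_tries:
        return "capture"
    return "escalate"
```

For example, `identity_gate(True, [0.62, 0.91])` lets the attempt start on the second capture, while three low scores route the learner to supervisor policy instead of silently blocking them.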

Risk-based flag hierarchy.

Not every signal should end an attempt. PlayAblo classifies behaviors into a severity model — so policy is applied consistently.

- Impersonation / mismatch

- No face detected beyond threshold

- Multiple faces detected

- Audio detected

- Frequent head movement

- Tab switching / focus change

- Copy/paste patterns

- Random captures

- Periodic evidence snapshots

- Baseline attempt telemetry
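One way to express a configurable Red/Amber/Green model is a lookup table plus per-signal thresholds. The sketch below is hypothetical: the signal names, the grouping of the listed behaviors into tiers (inferred from their order in the list above), and the threshold values are assumptions for illustration, not PlayAblo defaults.

```python
from enum import IntEnum
from typing import Mapping


class Severity(IntEnum):
    GREEN = 0  # evidence and telemetry only
    AMBER = 1  # logged and surfaced for review
    RED = 2    # high risk; prioritized for supervisor review


# Hypothetical tier assignment, inferred from the order of the list above.
DEFAULT_TIERS: Mapping[str, Severity] = {
    "identity_mismatch": Severity.RED,
    "no_face_seconds": Severity.RED,
    "multiple_faces": Severity.RED,
    "audio": Severity.AMBER,
    "head_movement": Severity.AMBER,
    "tab_switch": Severity.AMBER,
    "copy_paste": Severity.AMBER,
    "random_capture": Severity.GREEN,
    "periodic_snapshot": Severity.GREEN,
    "telemetry": Severity.GREEN,
}

# Hypothetical thresholds: a signal reaches its tier only once its measured
# value (a count, or seconds for "no_face_seconds") meets the threshold.
DEFAULT_THRESHOLDS: Mapping[str, float] = {
    "no_face_seconds": 10,
    "head_movement": 5,
    "tab_switch": 3,
}


def classify(signal: str, value: float,
             tiers: Mapping[str, Severity] = DEFAULT_TIERS,
             thresholds: Mapping[str, float] = DEFAULT_THRESHOLDS) -> Severity:
    """Map one observed signal to a severity; unknown signals default to Green."""
    if value < thresholds.get(signal, 1):
        return Severity.GREEN  # below threshold: kept as telemetry, not flagged
    return tiers.get(signal, Severity.GREEN)


def attempt_severity(signals: Mapping[str, float]) -> Severity:
    """An attempt's overall severity is its most severe classified signal."""
    return max((classify(name, value) for name, value in signals.items()),
               default=Severity.GREEN)
```

Below-threshold signals fall back to Green rather than being discarded, which matches the idea that signals are logged by default instead of triggering an immediate penalty.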

Human review where it matters.

Supervisors get a structured view: severity, timelines, evidence, and audit trails. Decisions stay within each supervisor's span of control, so review runs as a governed enterprise process rather than ad-hoc policing.
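A supervisor's review queue can be thought of as a filter over incidents, scoped by span of control and then sorted by severity. This is a sketch under stated assumptions: `Incident`, `Supervisor`, and `review_queue` are hypothetical names, and modeling span of control as a set of departments is a simplification chosen for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Incident:
    learner: str
    location: str
    department: str
    course: str
    severity: str                   # "red", "amber", or "green"
    evidence: tuple[str, ...] = ()  # e.g. snapshot or log identifiers


@dataclass(frozen=True)
class Supervisor:
    name: str
    departments: frozenset[str]  # hypothetical span of control


SEVERITY_RANK = {"red": 0, "amber": 1, "green": 2}


def review_queue(incidents: Iterable[Incident], supervisor: Supervisor, *,
                 location: Optional[str] = None, course: Optional[str] = None,
                 severities: Iterable[str] = ("red", "amber")) -> list[Incident]:
    """Incidents this supervisor may act on, most severe first."""
    wanted = set(severities)
    visible = [
        i for i in incidents
        if i.department in supervisor.departments  # span-of-control check
        and (location is None or i.location == location)
        and (course is None or i.course == course)
        and i.severity in wanted
    ]
    return sorted(visible, key=lambda i: SEVERITY_RANK[i.severity])
```

Applying the span-of-control check before any dashboard filter means a supervisor never sees, and so never acts on, incidents outside their scope.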

Governance & control

Proctoring must match policy. Configure thresholds, define actions, and retain auditable histories to satisfy compliance and internal fairness expectations.
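Configurable actions and an auditable history might fit together as below: an automatic action per severity, plus supervisor decisions that must carry a written reason, all recorded in one log. The action names, `AuditLog`, `apply_policy`, and `supervisor_decision` are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Mapping

# Hypothetical default actions per severity; configurable per assessment.
DEFAULT_ACTIONS: Mapping[str, str] = {
    "red": "hold_result_for_review",
    "amber": "flag_for_review",
    "green": "no_action",
}


@dataclass
class AuditLog:
    """Decision history; this sketch only ever appends entries."""
    entries: list[dict] = field(default_factory=list)

    def record(self, attempt_id: str, actor: str, action: str, reason: str) -> dict:
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "attempt": attempt_id,
            "actor": actor,
            "action": action,
            "reason": reason,
        }
        self.entries.append(entry)
        return entry


def apply_policy(attempt_id: str, severity: str, log: AuditLog,
                 actions: Mapping[str, str] = DEFAULT_ACTIONS) -> str:
    """Apply the configured automatic action and record why it happened."""
    action = actions[severity]
    log.record(attempt_id, "policy", action, f"severity={severity}")
    return action


def supervisor_decision(attempt_id: str, supervisor: str, decision: str,
                        reason: str, log: AuditLog) -> str:
    """Record a human decision; a written reason keeps it defensible in disputes."""
    if not reason.strip():
        raise ValueError("a reason is required for an auditable decision")
    log.record(attempt_id, supervisor, decision, reason)
    return decision
```

Because automatic and human actions land in the same log, a disputed result can be traced from the triggering severity to the final decision and its stated reason.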


Make assessments trustworthy — for compliance and capability decisions

Run certification-grade integrity, maintain internal fairness, and feed higher-confidence evidence into Skills Intelligence — with governance built in.